This report describes the methodology of two waves of Pew Research Center’s American Trends Panel (ATP): Wave 166, conducted March 24-30, 2025, and Wave 170, conducted May 5-11, 2025. Both waves surveyed U.S. adults, and their findings will inform a range of analyses of public opinion and societal trends in the United States.

Understanding the Pillars of American Trends Panel Research

The American Trends Panel (ATP) is Pew Research Center’s nationally representative panel of randomly selected U.S. adults. The methodology of each wave is designed to provide both broad representation and statistically sound estimates, so that researchers can draw well-supported conclusions about public attitudes, behaviors, and experiences.

Wave 166: Capturing a Snapshot of American Sentiment

Overview and Response Rates

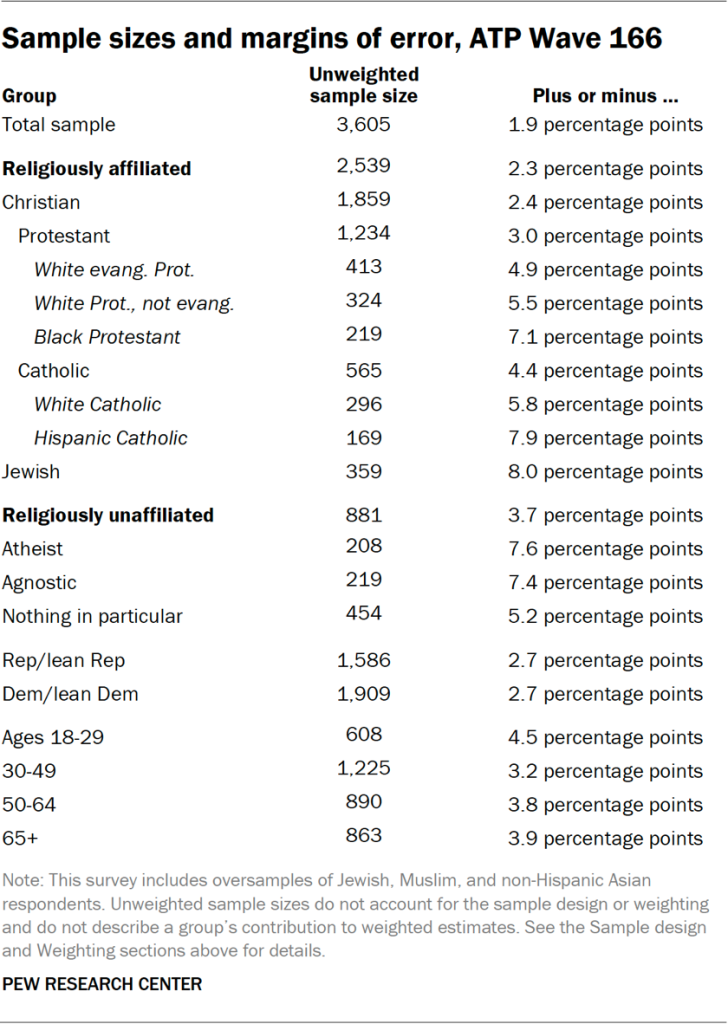

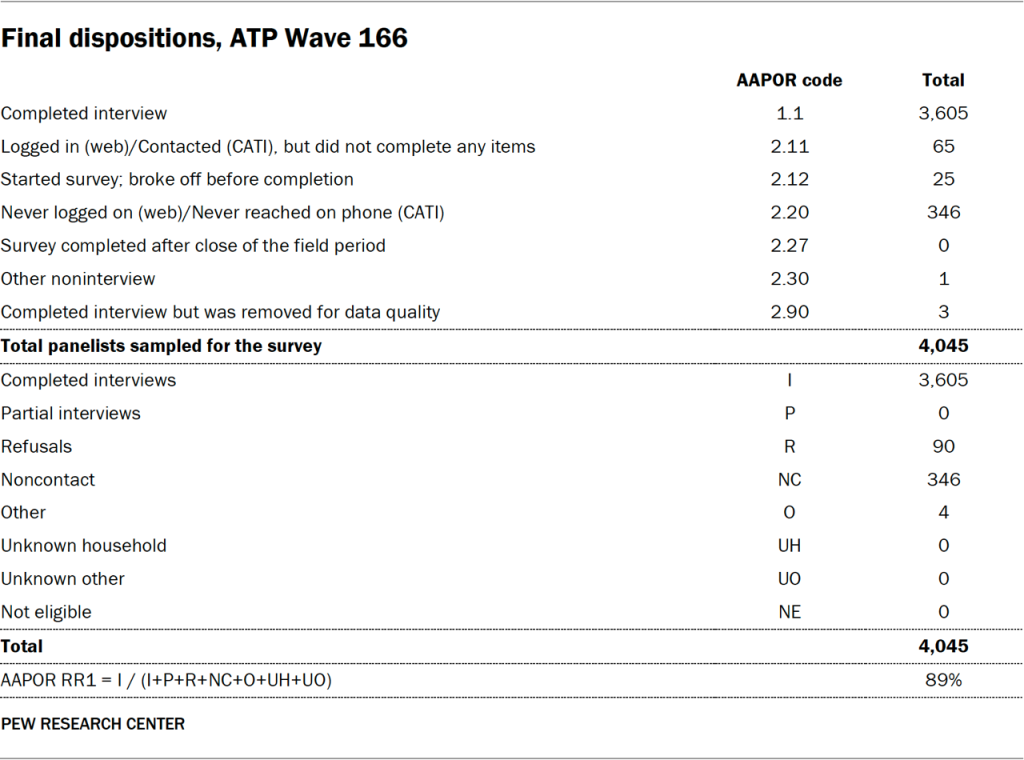

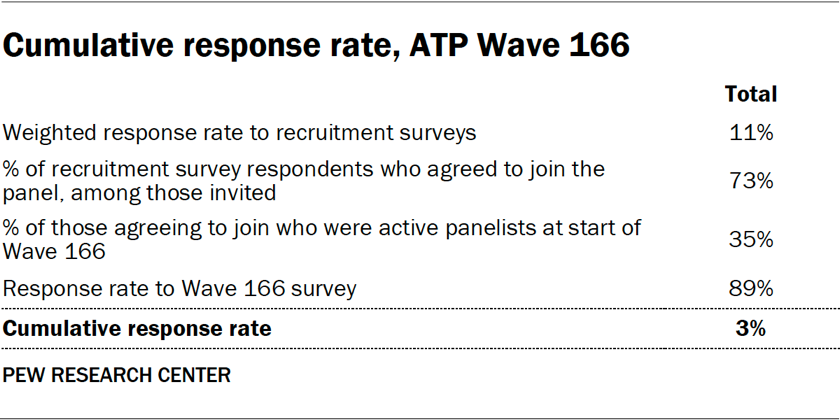

Wave 166 was fielded from March 24 to March 30, 2025. A total of 3,605 panelists responded out of 4,045 who were sampled, for a survey-level response rate of 89%. The cumulative response rate, which accounts for nonresponse at recruitment and for panel attrition, is 3%. The break-off rate among panelists who began the survey is 1%. The margin of sampling error for the full sample of 3,605 respondents is plus or minus 1.9 percentage points at the 95% confidence level.
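The figures above can be checked with a few lines of arithmetic. The sketch below recomputes the survey-level response rate from the reported counts and, for comparison only, the margin of error that a simple random sample of the same size would have. The function names are illustrative, and the simple-random-sample formula is an assumption made here, not the procedure used to produce the reported ±1.9 points.

```python
import math

def response_rate(completes: int, sampled: int) -> float:
    """Survey-level response rate: completed interviews / sampled panelists."""
    return completes / sampled

def srs_margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Margin of error for a proportion under simple random sampling.

    Uses the conservative p = 0.5 and z = 1.96 for 95% confidence.
    This ignores weighting, so it is a lower bound for a weighted survey.
    """
    return z * math.sqrt(p * (1 - p) / n)

# Counts reported for Wave 166
completes, sampled = 3605, 4045

rate = response_rate(completes, sampled)
srs_moe = srs_margin_of_error(completes)

print(f"Response rate: {rate:.1%}")          # 89.1%
print(f"SRS margin of error: {srs_moe:.2%}")  # 1.63%
```

The unweighted figure (about 1.6 points) is smaller than the reported 1.9 points, which is consistent with the statement that sampling errors take the effect of weighting into account.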

Ensuring Diverse Representation

To allow more precise estimates for smaller demographic subgroups, Wave 166 oversampled Jewish, Muslim, and non-Hispanic Asian adults. Without oversampling, a standard national sample would include too few members of these groups to describe their views reliably. The oversampled groups are weighted back to their correct proportions in the U.S. adult population, so national estimates remain representative.

Data Collection and Infrastructure

SSRS conducted the Wave 166 survey for Pew Research Center via online (n=3,460) and live telephone (n=145) interviewing. Offering both modes reaches panelists with different preferences and levels of internet access. Interviews were conducted in both English and Spanish. For more on the panel itself, see "About the American Trends Panel."

Recruitment and Sample Design

Since 2018, the ATP has used address-based sampling (ABS) for recruitment. A study cover letter and a pre-incentive are mailed to a stratified, random sample of households selected from the U.S. Postal Service’s Computerized Delivery Sequence File, which is estimated to cover 90% to 98% of the U.S. population. Within each sampled household, the adult with the next birthday is invited to participate. Before 2018, the ATP was recruited using landline and cellphone random-digit-dial surveys. National samples of U.S. adults have been recruited to the ATP annually since 2014. In some years, recruitment also included oversamples of underrepresented groups: Hispanic adults in 2019, Black adults in 2022, and Asian adults in 2023.

The target population for Wave 166 was noninstitutionalized persons ages 18 and older living in the United States. The wave used a stratified random sample from the ATP in which Jewish, Muslim, and non-Hispanic Asian adults were selected with certainty. The remaining panelists were sampled at rates chosen so that the share of respondents in each stratum closely mirrors that stratum’s share of the U.S. adult population. Respondent weights are adjusted to account for differential probabilities of selection.
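A toy example may help show how selecting some groups with certainty and then weighting for differential selection probabilities fits together. The sketch below uses inverse-probability design weights, a standard approach assumed here for illustration. The stratum names, panel sizes, and sampling rates are hypothetical and are not taken from Wave 166.

```python
# Hypothetical panel: a small stratum selected with certainty (probability 1.0)
# and a large stratum subsampled at a lower rate. All numbers are invented.
strata = {
    "oversampled": {"panel_size": 100, "selection_prob": 1.0},
    "other": {"panel_size": 900, "selection_prob": 0.25},
}

def design_weight(selection_prob: float) -> float:
    """Inverse-probability weight: each sampled person stands in for 1/p panelists."""
    if not 0 < selection_prob <= 1:
        raise ValueError("selection probability must be in (0, 1]")
    return 1.0 / selection_prob

# Number of panelists sampled from each stratum
sampled = {
    name: round(s["panel_size"] * s["selection_prob"]) for name, s in strata.items()
}

# Unweighted, the oversampled stratum is over-represented in the sample
unweighted_share = sampled["oversampled"] / sum(sampled.values())

# Weighted totals restore each stratum to its size in the panel
weighted_totals = {
    name: sampled[name] * design_weight(strata[name]["selection_prob"])
    for name in strata
}
weighted_share = weighted_totals["oversampled"] / sum(weighted_totals.values())

print(f"Unweighted share: {unweighted_share:.1%}")
print(f"Weighted share:   {weighted_share:.1%}")
```

In this toy case the oversampled stratum makes up roughly 31% of the sample but 10% after weighting, matching its share of the hypothetical panel.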

Questionnaire Development and Quality Control

Pew Research Center and SSRS developed the Wave 166 questionnaire together. The SSRS project team and Pew Research Center researchers tested the web survey program on both PC and mobile devices, and test data was analyzed to verify the logic and randomizations before launch. After fielding, Pew Research Center researchers ran data quality checks to identify respondents showing patterns of satisficing, such as leaving questions unanswered at very high rates or consistently selecting the first or last answer option. Three respondents were removed as a result.
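The two satisficing patterns named above can be expressed as simple checks. The sketch below is one possible way to flag them, not the procedure Pew Research Center used: the data layout (answers as zero-based option indexes, `None` for unanswered), the function names, and the 50% nonresponse threshold are all assumptions chosen for illustration.

```python
from typing import Optional, Sequence

def item_nonresponse_rate(answers: Sequence[Optional[int]]) -> float:
    """Share of questions left unanswered (None)."""
    if not answers:
        return 0.0
    return sum(a is None for a in answers) / len(answers)

def always_first_or_last(
    answers: Sequence[Optional[int]], n_options: Sequence[int]
) -> bool:
    """True if every answered question uses the first option, or every one the last."""
    answered = [(a, k) for a, k in zip(answers, n_options) if a is not None]
    if not answered:
        return False
    all_first = all(a == 0 for a, _ in answered)
    all_last = all(a == k - 1 for a, k in answered)
    return all_first or all_last

def flag_satisficing(
    answers: Sequence[Optional[int]],
    n_options: Sequence[int],
    max_nonresponse: float = 0.5,  # hypothetical threshold
) -> bool:
    """Flag a respondent who trips either check."""
    return (
        item_nonresponse_rate(answers) > max_nonresponse
        or always_first_or_last(answers, n_options)
    )

# Number of answer options for each of four hypothetical questions
n_options = [4, 5, 2, 4]

respondents = {
    "r1": [1, 3, 0, 2],           # varied answers
    "r2": [0, 0, 0, 0],           # always the first option
    "r3": [None, None, None, 1],  # mostly unanswered
    "r4": [3, 4, 1, 3],           # always the last option
}

flags = {rid: flag_satisficing(ans, n_options) for rid, ans in respondents.items()}
print(flags)
```

In practice a flag like this would usually prompt a manual review rather than automatic removal, since a short or skewed questionnaire can produce the same patterns from attentive respondents.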

Incentives and Data Collection Protocol

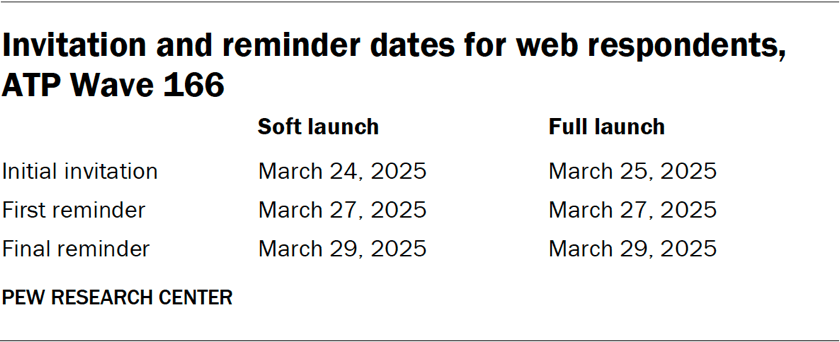

All respondents were offered a post-paid incentive for their participation, redeemable as a check or a gift code for major online retailers. Incentive amounts ranged from $5 to $20, with amounts set to boost participation among harder-to-reach groups. The field period for Wave 166 was March 24-30, 2025. Online panelists received an email invitation and up to two email reminders, and those who had consented to SMS messages received the same invitations and reminders by text. For panelists who take surveys by phone, prenotification postcards were mailed on March 21, and a soft launch began on March 24. All remaining sampled phone panelists were contacted during the field period, with trained SSRS interviewers making up to six calls.

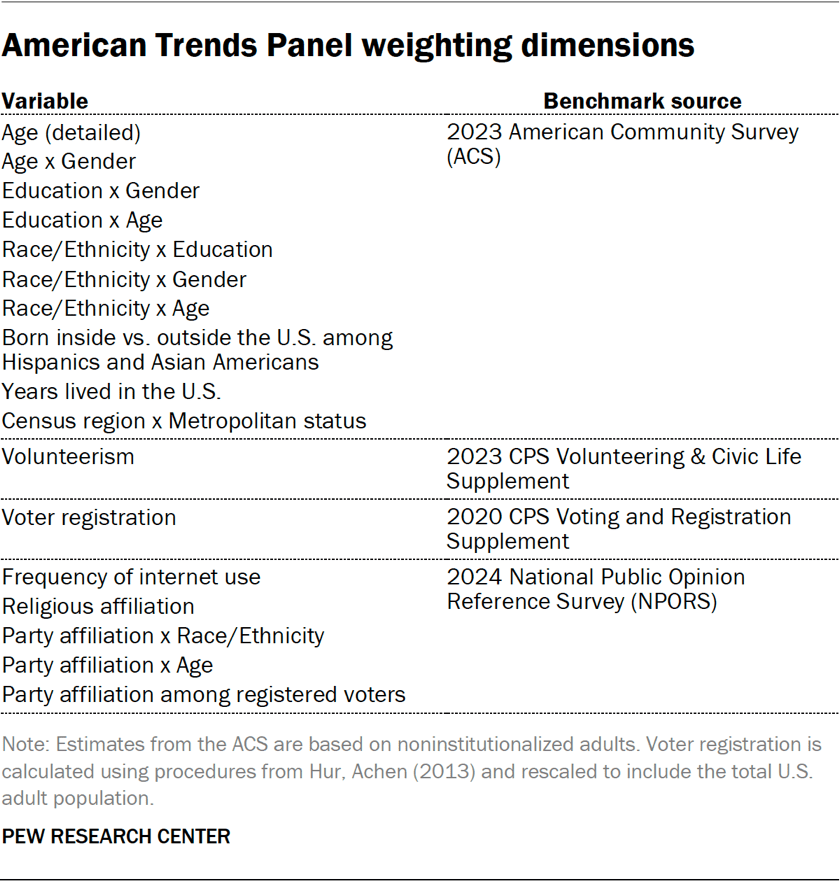

Weighting and Margin of Error

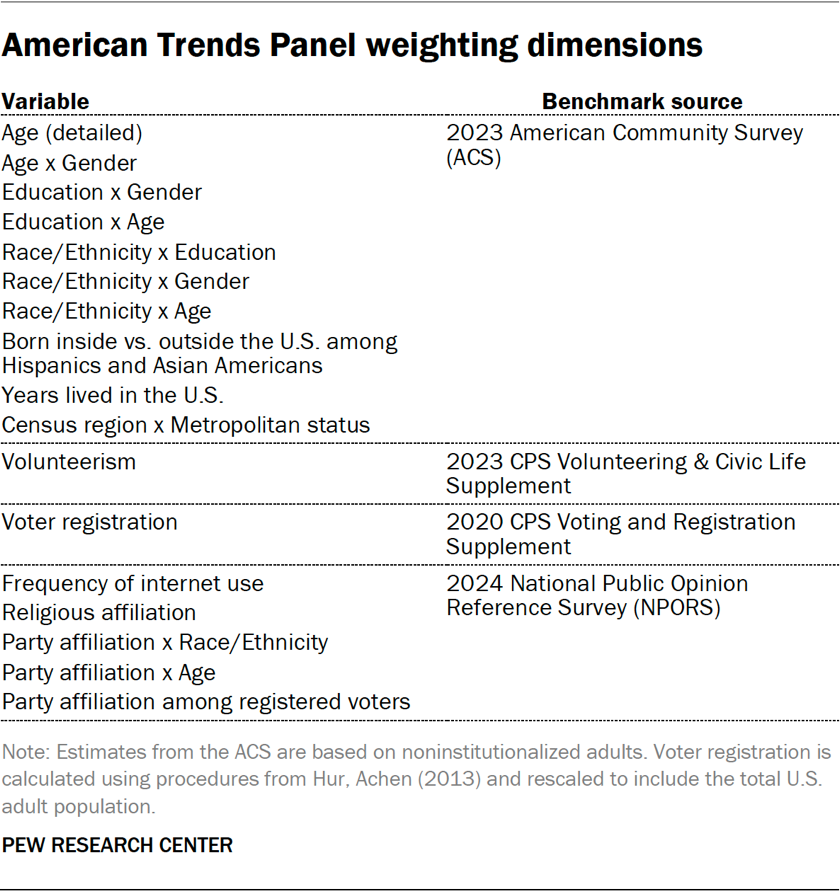

ATP data is weighted in a multistage process that accounts for sampling and nonresponse at several points. Each panelist begins with a base weight that reflects their probability of recruitment into the panel. These weights are then calibrated to population benchmarks to correct for nonresponse at recruitment and for panel attrition. If only a subsample of panelists was invited to a wave, the weights are adjusted to account for differential probabilities of selection. Sampling errors and tests of statistical significance take the effect of weighting into account. For Wave 166, the margin of sampling error for the full sample of 3,605 respondents is ±1.9 percentage points at the 95% confidence level. Tables of unweighted sample sizes and margins of error for subgroups are available upon request.
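The calibration step can be illustrated with raking (iterative proportional fitting), a common way to adjust weights so that weighted sample shares match known population shares. The text above does not name the calibration method, so raking is an assumption here. The respondent records, base weights, and benchmark shares below are all hypothetical.

```python
def rake(rows, targets, weight_key="base_weight", iterations=50, tol=1e-10):
    """Adjust weights so weighted shares match target shares on each variable.

    rows:    list of dicts, one per respondent
    targets: {variable: {category: population share}}, shares summing to 1
    Returns a list of calibrated weights in the same order as rows.
    """
    weights = [r[weight_key] for r in rows]
    for _ in range(iterations):
        max_shift = 0.0
        for var, shares in targets.items():
            total = sum(weights)
            # Current weighted total in each category of this variable
            current = {}
            for r, w in zip(rows, weights):
                current[r[var]] = current.get(r[var], 0.0) + w
            # Scale each respondent so the category hits its target share
            for i, r in enumerate(rows):
                factor = shares[r[var]] * total / current[r[var]]
                weights[i] *= factor
                max_shift = max(max_shift, abs(factor - 1.0))
        if max_shift < tol:
            break
    return weights

def weighted_share(rows, weights, var, category):
    """Weighted share of respondents falling in one category."""
    in_cat = sum(w for r, w in zip(rows, weights) if r[var] == category)
    return in_cat / sum(weights)

# Hypothetical respondents with base weights (inverse recruitment probability)
rows = [
    {"sex": "F", "age": "18-49", "base_weight": 1.0},
    {"sex": "F", "age": "50+", "base_weight": 2.0},
    {"sex": "M", "age": "18-49", "base_weight": 1.5},
    {"sex": "M", "age": "50+", "base_weight": 1.0},
    {"sex": "F", "age": "18-49", "base_weight": 1.0},
    {"sex": "M", "age": "50+", "base_weight": 2.5},
]

# Hypothetical population benchmarks
targets = {
    "sex": {"F": 0.51, "M": 0.49},
    "age": {"18-49": 0.55, "50+": 0.45},
}

final_weights = rake(rows, targets)
print(round(weighted_share(rows, final_weights, "sex", "F"), 4))
print(round(weighted_share(rows, final_weights, "age", "18-49"), 4))
```

Raking matches each variable's margin in turn and repeats until the adjustments become negligible. It requires every target category to be present in the sample, which the toy data above satisfies.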

Wave 170: Expanding the Reach and Refining Data Collection

Overview and Enhanced Response Rates

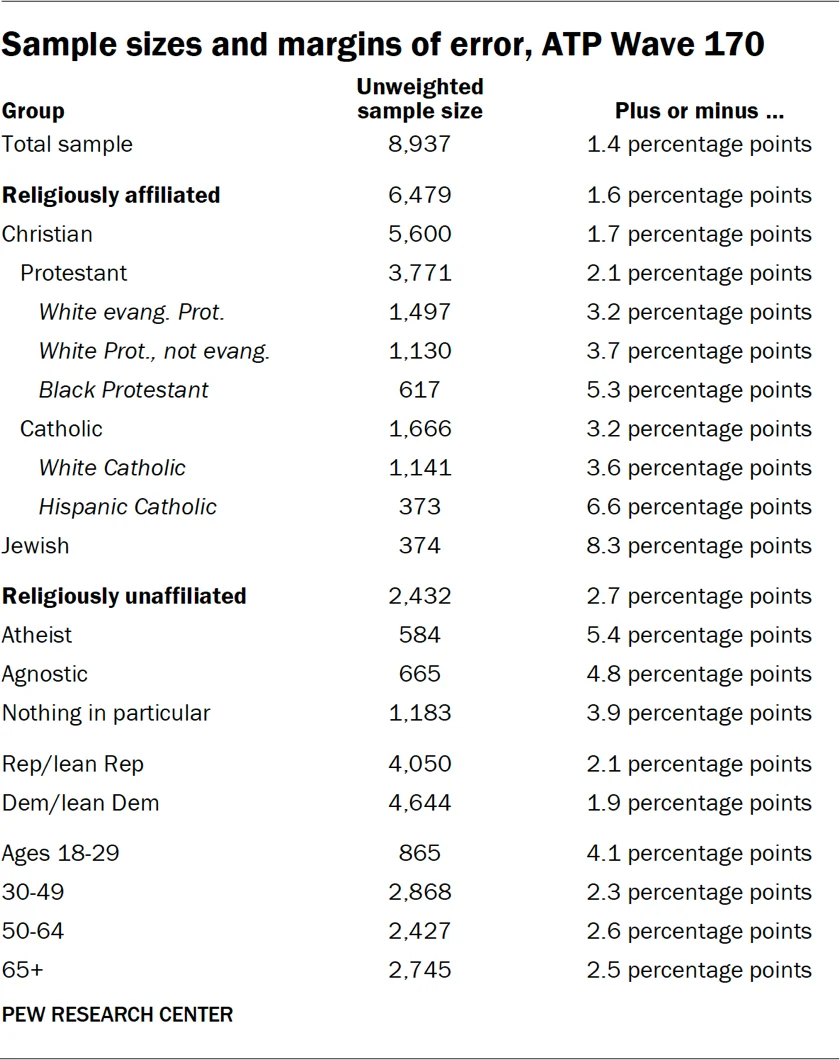

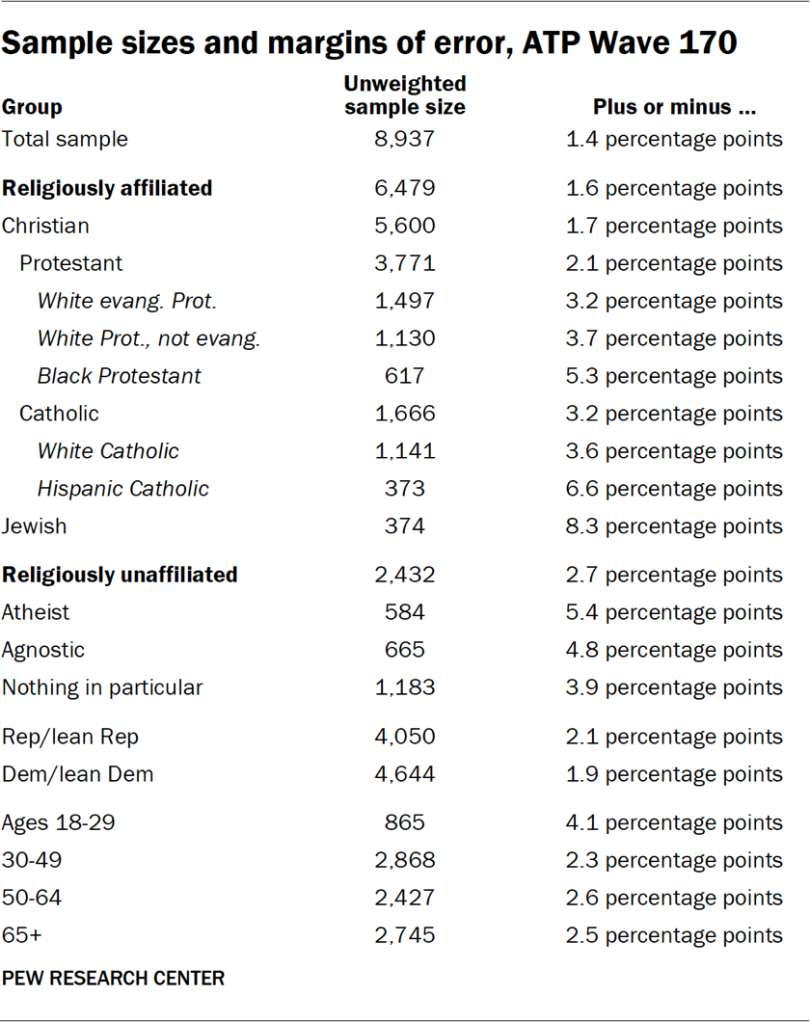

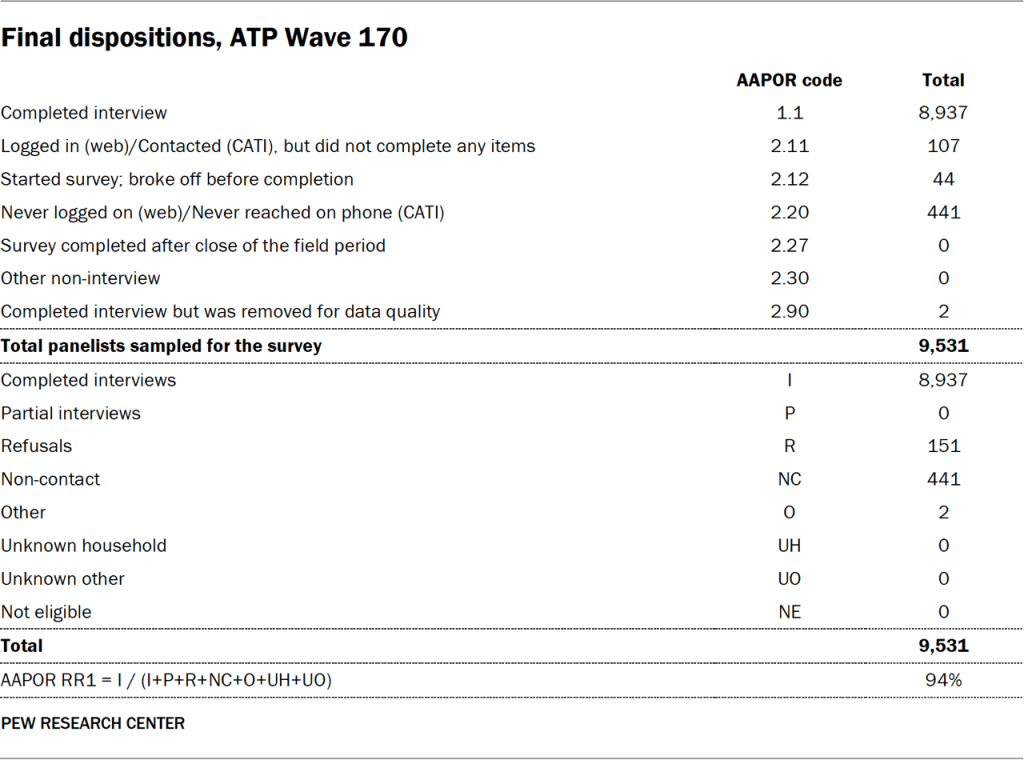

Wave 170 was fielded from May 5 to May 11, 2025. A total of 8,937 panelists responded out of 9,531 who were sampled, for a survey-level response rate of 94%, higher than in Wave 166. The cumulative response rate is again 3%, and the break-off rate among panelists who began the survey is less than 1%. The margin of sampling error for the full sample of 8,937 respondents is ±1.4 percentage points at the 95% confidence level.

Data Collection Framework

As in Wave 166, SSRS conducted Wave 170 via online (n=8,720) and live telephone (n=217) interviewing, in both English and Spanish. Using the same modes and languages in both waves supports comparability between them.

Sample Design and Panel Engagement

For Wave 170, the target population was again noninstitutionalized persons ages 18 and older living in the United States. All active ATP members who had previously completed Wave 162 were invited to participate, which provides continuity with that earlier wave’s respondents. Respondent weights are adjusted to account for differential probabilities of selection.

Quality Assurance and Incentives

Questionnaire development and testing for Wave 170 followed the same process as Wave 166: Pew Research Center and SSRS developed the questionnaire together and tested the web program before launch. Data quality checks led to the removal of two respondents who showed patterns of satisficing. As in Wave 166, all respondents were offered a post-paid incentive, with differential amounts intended to increase participation across demographic groups.

Data Collection Timeline and Protocols

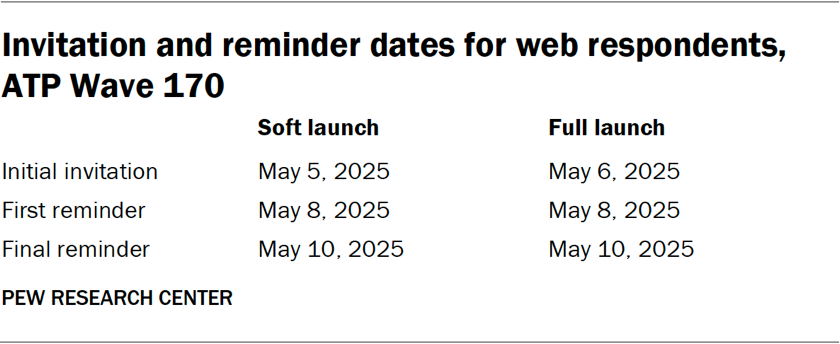

The field period for Wave 170 was May 5-11, 2025. Online panelists received postcard notifications on May 5. Survey invitations went to 60 panelists in a soft launch on May 5, followed by a full launch on May 6 to all remaining English- and Spanish-speaking online panelists. Email and SMS reminders were sent to encourage response. For panelists who take surveys by phone, prenotification postcards were sent on May 2, and a soft launch began on May 5. As in Wave 166, SSRS interviewers made up to six calls.

Weighting and Margin of Error

Wave 170 was weighted using the same ATP procedure as Wave 166. With its larger sample, Wave 170 has a smaller margin of sampling error for the full sample (±1.4 percentage points at the 95% confidence level, compared with ±1.9 for Wave 166), which supports more precise estimates, including for subgroups. Tables of sample sizes and margins of error for subgroups are available upon request.
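One way to read the reported margins is to convert them into an approximate effective sample size: the size of a simple random sample that would yield the same margin of error. The sketch below does this for both waves. It assumes the reported margins correspond to the conservative p = 0.5 case at 95% confidence, and because the margins are rounded to one decimal place the results are rough. The function name is illustrative.

```python
def effective_sample_size(moe: float, p: float = 0.5, z: float = 1.96) -> float:
    """Size of a simple random sample that would give the stated margin of error."""
    return (z ** 2) * p * (1 - p) / moe ** 2

# (respondents, reported margin of error) for each wave
waves = {
    "Wave 166": (3605, 0.019),
    "Wave 170": (8937, 0.014),
}

results = {}
for name, (n, moe) in waves.items():
    n_eff = effective_sample_size(moe)
    # Ratio of actual to effective size: the approximate design effect
    results[name] = (n_eff, n / n_eff)
    print(f"{name}: n = {n}, effective n ~ {n_eff:.0f}, design effect ~ {n / n_eff:.2f}")
```

Under these assumptions, Wave 170's 8,937 interviews carry roughly the precision of a simple random sample of about 4,900, and Wave 166's 3,605 interviews that of about 2,660. The gap between actual and effective size reflects the variance added by weighting.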

Comparative Analysis and Broader Implications

The two waves were fielded about six weeks apart (Wave 166 in late March 2025 and Wave 170 in early May 2025), which gives researchers an opportunity to track shifts in public opinion over that period. Both waves used the same ATP recruitment, data collection, and weighting procedures, which supports comparability of findings. Their sample designs differed, however: Wave 166 drew a stratified sample with oversamples, while Wave 170 invited all active panelists who had completed Wave 162.

The survey-level response rates in both waves (89% and 94%) are high. They should be read alongside the cumulative response rate of 3%, which also reflects nonresponse at recruitment and panel attrition. The oversampling of Jewish, Muslim, and non-Hispanic Asian adults in Wave 166 allows the views of these smaller groups to be estimated more precisely than a standard national sample would permit.

Documenting the sampling techniques, data collection protocols, and weighting procedures in this detail allows other researchers and the public to assess the findings from these surveys critically. Data from both waves will be used in future Pew Research Center reports on social, political, and economic topics in the United States.