Pew Research Center has published detailed methodology reports for two American Trends Panel (ATP) survey waves fielded in spring 2025: Wave 166, conducted March 24-30, 2025, and Wave 170, conducted May 5-11, 2025. The reports describe how each wave was sampled, fielded, checked for data quality, and weighted so that the results represent U.S. adults. They are the reference point for interpreting analyses built on these data, and their level of detail reflects the Center’s emphasis on transparency in survey research.

Methodology for ATP Wave 166: March 2025

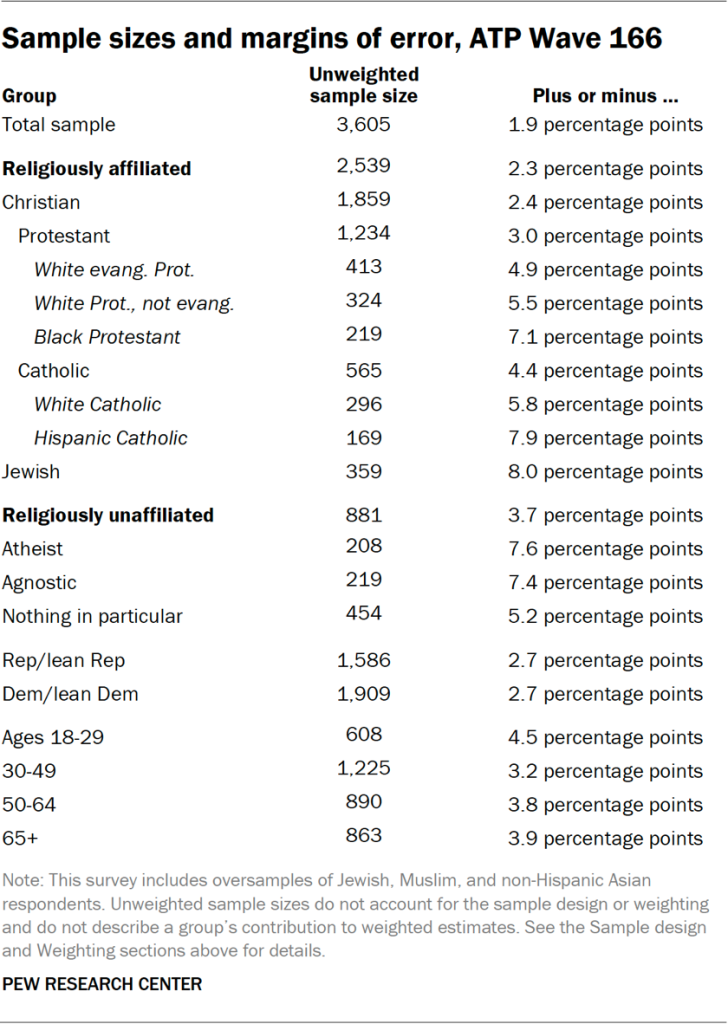

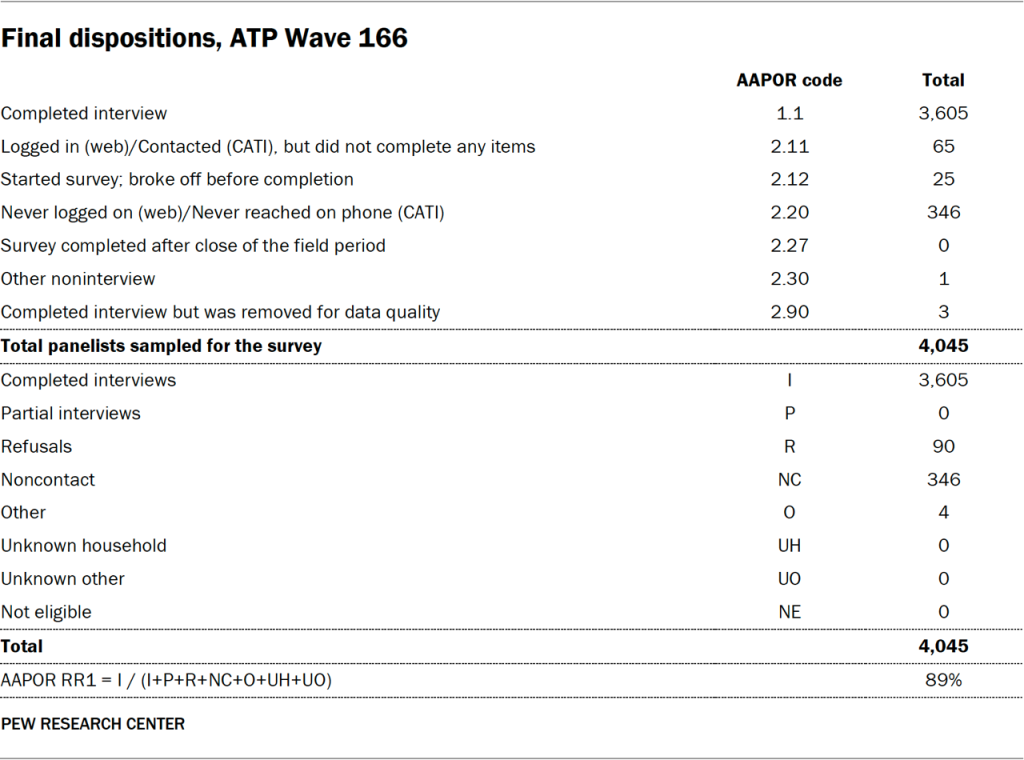

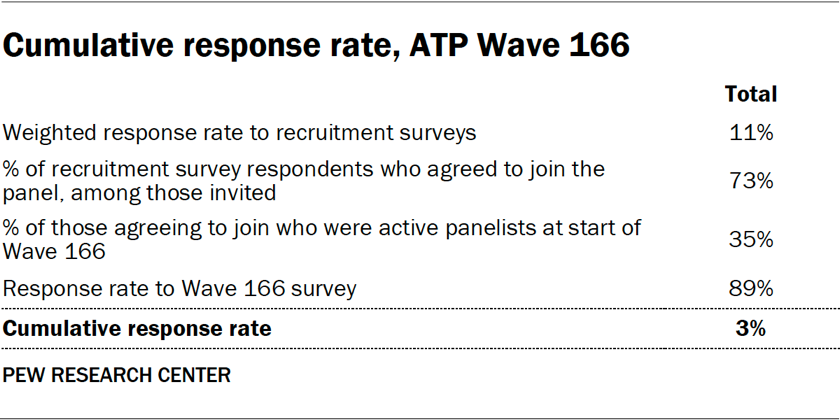

Wave 166 of the American Trends Panel, a nationally representative panel of U.S. adults, was conducted from March 24 to March 30, 2025. Of 4,045 sampled panelists, 3,605 responded, for a survey-level response rate of 89%. The cumulative response rate, which also accounts for nonresponse to the panel’s recruitment surveys and for attrition from the panel, is 3%. The two figures differ because the survey-level rate counts only panelists who had already been recruited and remained active. The break-off rate among panelists who began the survey was 1%. The margin of sampling error for the full sample is plus or minus 1.9 percentage points at the 95% confidence level.
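To make these figures concrete, the sketch below recomputes the survey-level response rate from the reported counts and compares the published margin of error with the margin a simple random sample of the same size would have. It assumes the usual worst-case proportion p = 0.5 and z = 1.96. The helper names are illustrative, and the "implied design effect" is only a rough back-calculation from a rounded published figure, not a number the report provides.

```python
import math

def response_rate(completes: int, sampled: int) -> float:
    """Survey-level response rate: completed interviews divided by sampled panelists."""
    return completes / sampled

def srs_margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Margin of error in percentage points for a proportion under simple random sampling."""
    return 100 * z * math.sqrt(p * (1 - p) / n)

# Wave 166 figures as reported.
sampled, completes = 4045, 3605
published_moe = 1.9  # percentage points; already reflects weighting

rr = response_rate(completes, sampled)
srs_moe = srs_margin_of_error(completes)
# If MOE_weighted = sqrt(deff) * MOE_srs, then deff = (MOE_weighted / MOE_srs) ** 2.
implied_deff = (published_moe / srs_moe) ** 2

print(f"survey-level response rate: {rr:.0%}")        # 89%
print(f"MOE if simple random sample: ±{srs_moe:.1f}")  # ±1.6
print(f"implied design effect: {implied_deff:.1f}")    # 1.4
```

The gap between roughly ±1.6 and the published ±1.9 points is the cost of weighting, which is why the report states margins that "incorporate the effect of weighting" rather than textbook simple-random-sample figures.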

To allow precise estimates for smaller groups, Wave 166 included oversamples of Jewish, Muslim, and non-Hispanic Asian adults, which provide enough respondents for more detailed analysis of their opinions and experiences. The data are then weighted so that each oversampled group counts in proportion to its actual share of the U.S. adult population, which keeps the oversamples from distorting estimates for adults overall.

SSRS conducted the data collection for Wave 166 using two modes: most panelists responded online (n=3,460), and 145 were interviewed by live telephone interviewers. Interviews were available in English and Spanish.

Panel Recruitment and Sample Design

Since 2018, the ATP has relied on address-based sampling (ABS) for its panel recruitment. This method involves mailing study invitations and pre-incentives to a stratified, random sample of households selected from the U.S. Postal Service’s Computerized Delivery Sequence File, which covers an estimated 90% to 98% of the U.S. population. Within each selected household, the adult with the next birthday is invited to participate. Prior to 2018, the panel was recruited through random-digit-dial surveys conducted via landline and cellphone.
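The next-birthday rule is a simple quasi-random way to choose one adult per household. The sketch below is a hypothetical implementation, not the ATP's production code. It assumes that a birthday falling on the selection date counts as "next," that a Feb. 29 birthday is observed on March 1 in non-leap years, and that ties go to the first adult listed.

```python
from datetime import date

def days_until_birthday(month: int, day: int, today: date) -> int:
    """Days from `today` to the next occurrence of a (month, day) birthday; 0 if it is today."""
    def occurrence(year: int) -> date:
        try:
            return date(year, month, day)
        except ValueError:
            # Assumption: a Feb 29 birthday is observed on Mar 1 in non-leap years.
            return date(year, 3, 1)

    this_year = occurrence(today.year)
    if this_year >= today:
        return (this_year - today).days
    return (occurrence(today.year + 1) - today).days

def next_birthday_adult(adults: dict, today: date) -> str:
    """Pick the adult whose birthday comes soonest after `today` (ties: first listed)."""
    return min(adults, key=lambda name: days_until_birthday(*adults[name], today))

# Hypothetical household: name -> (birth month, birth day)
household = {"Ana": (11, 2), "Ben": (4, 15), "Cam": (1, 30)}
print(next_birthday_adult(household, date(2025, 3, 24)))  # Ben
```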

The ATP has recruited a national sample of U.S. adults roughly once a year since 2014. In some years, recruitment has included an oversample to improve the representation of underrepresented groups: Hispanic adults in 2019, Black adults in 2022, and Asian adults in 2023.

The target population for Wave 166 was noninstitutionalized adults ages 18 and older living in the U.S. The sample was a stratified random sample drawn from the ATP. As part of the oversampling strategy, Jewish, Muslim, and non-Hispanic Asian panelists were selected with certainty, meaning every active panelist in those groups was invited. The remaining panelists were sampled at rates designed to make each stratum's share of respondents proportional to its share of the U.S. adult population. Respondent weights are then adjusted for these differential selection probabilities.
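How certainty selection and differential sampling rates feed into the weights can be illustrated with a toy frame. This sketch uses Bernoulli selection within strata for simplicity (the within-stratum selection method is not specified above), and the stratum sizes and the 0.35 sampling rate are invented. Each selected panelist's design weight is the inverse of their selection probability, which is what lets an oversampled group be weighted back to its population share.

```python
import random

def select_panelists(frame, rates, seed=0):
    """Bernoulli selection within strata; returns (id, stratum, design_weight) tuples.

    A rate of 1.0 means the stratum is taken with certainty (weight 1).
    """
    rng = random.Random(seed)
    sample = []
    for panelist_id, stratum in frame:
        p = rates[stratum]
        if rng.random() < p:
            sample.append((panelist_id, stratum, 1.0 / p))  # weight = 1 / selection probability
    return sample

# Hypothetical panel: 200 members of an oversampled group, 10,000 others.
frame = [(i, "oversample") for i in range(200)] + [(i, "other") for i in range(200, 10_200)]
rates = {"oversample": 1.0, "other": 0.35}  # illustrative rates, not Pew's

sample = select_panelists(frame, rates)
n_over = sum(1 for _, s, _ in sample if s == "oversample")
unweighted_share = n_over / len(sample)
weighted_share = sum(w for _, s, w in sample if s == "oversample") / sum(w for _, _, w in sample)

print(f"unweighted share of oversampled group: {unweighted_share:.1%}")
print(f"weighted share:                        {weighted_share:.1%}")  # close to 200 / 10,200
```

The oversampled group makes up roughly 5% of the unweighted sample but only about 2% once weighted, matching its share of the toy frame.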

Questionnaire Development and Data Collection

The questionnaire for Wave 166 was developed by Pew Research Center in consultation with SSRS. The SSRS project team and Pew Research Center researchers tested the online survey on desktop and mobile devices, and test data were analyzed to confirm that the survey logic and randomizations worked as intended before launch.

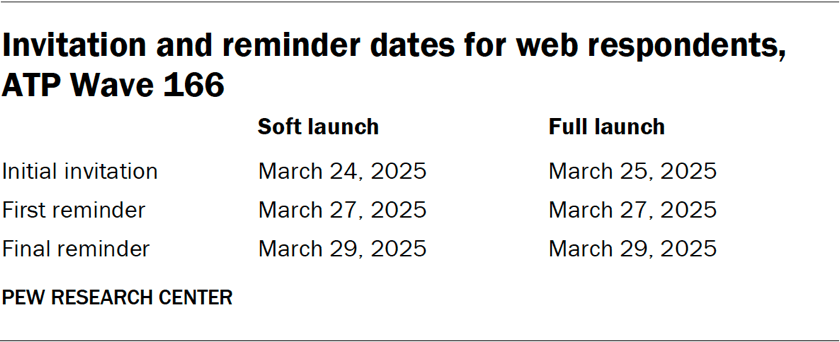

Data collection occurred over a seven-day period, from March 24 to March 30, 2025. For online panelists, postcard notifications were mailed on March 24, and a soft launch with 60 panelists began the same day. The full launch to all remaining English- and Spanish-speaking online panelists followed on March 25, and panelists who had not yet responded received up to two email reminders. Panelists who had opted into SMS notifications received a text invitation and up to two text message reminders.

For telephone interviews, prenotification postcards were mailed on March 21, three days before fieldwork began. A soft launch on March 24 aimed to complete five interviews. Interviewers then dialed all remaining English- and Spanish-speaking sampled phone panelists throughout the field period, making up to six calls per panelist.
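Because both endpoints of the field period count, March 24 through March 30 is seven days, and the phone prenotification went out before fieldwork opened. The sketch below lays out the Wave 166 contact events described above as dated records. The record layout is an illustrative assumption, and reminder dates are omitted because the text does not specify them.

```python
from datetime import date

field_start, field_end = date(2025, 3, 24), date(2025, 3, 30)

# Wave 166 contact events as described above: (date, mode, event).
schedule = [
    (date(2025, 3, 24), "online", "postcard notifications mailed"),
    (date(2025, 3, 24), "online", "soft launch (60 panelists)"),
    (date(2025, 3, 25), "online", "full launch; up to two email/SMS reminders follow"),
    (date(2025, 3, 21), "phone", "prenotification postcards mailed"),
    (date(2025, 3, 24), "phone", "soft launch (goal: 5 completed interviews)"),
]

field_days = (field_end - field_start).days + 1  # both endpoints count
print(f"field period: {field_days} days")  # field period: 7 days

for when, mode, event in sorted(schedule):
    offset = (when - field_start).days  # negative = before fieldwork opened
    print(f"day {offset:+d}  {mode:<6} {event}")
```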

Data Quality and Weighting

To identify satisficing (answering with minimal effort), Pew Research Center researchers checked for patterns such as leaving a very high share of questions unanswered or always selecting the first or last answer option. Based on these checks, three Wave 166 respondents were removed from the dataset before analysis.
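The exact satisficing criteria are not given, so the following is only a hypothetical sketch of the two patterns named above: a high share of skipped items, and choosing the first or last option almost every time. The 50% and 90% thresholds and the function name are assumptions, and in this sketch a flag only marks a case for review rather than removing it.

```python
from typing import Optional, Sequence

def satisficing_flags(answers: Sequence[Optional[int]], n_options: Sequence[int],
                      max_missing: float = 0.5, max_extreme: float = 0.9) -> dict:
    """Flag answer patterns that may indicate satisficing (thresholds are hypothetical).

    answers[i] is the 1-based option chosen for item i, or None if skipped;
    n_options[i] is the number of options item i offered.
    """
    if not answers or len(answers) != len(n_options):
        raise ValueError("answers and n_options must be non-empty and the same length")
    answered = [(a, k) for a, k in zip(answers, n_options) if a is not None]
    missing_share = 1 - len(answered) / len(answers)
    denom = max(len(answered), 1)  # avoid dividing by zero if everything was skipped
    first_share = sum(a == 1 for a, _ in answered) / denom
    last_share = sum(a == k for a, k in answered) / denom
    return {
        "high_missing": missing_share > max_missing,
        "always_first": first_share >= max_extreme,
        "always_last": last_share >= max_extreme,
    }

# A respondent who picked the first of four options on all ten items:
print(satisficing_flags([1] * 10, [4] * 10))
# {'high_missing': False, 'always_first': True, 'always_last': False}
```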

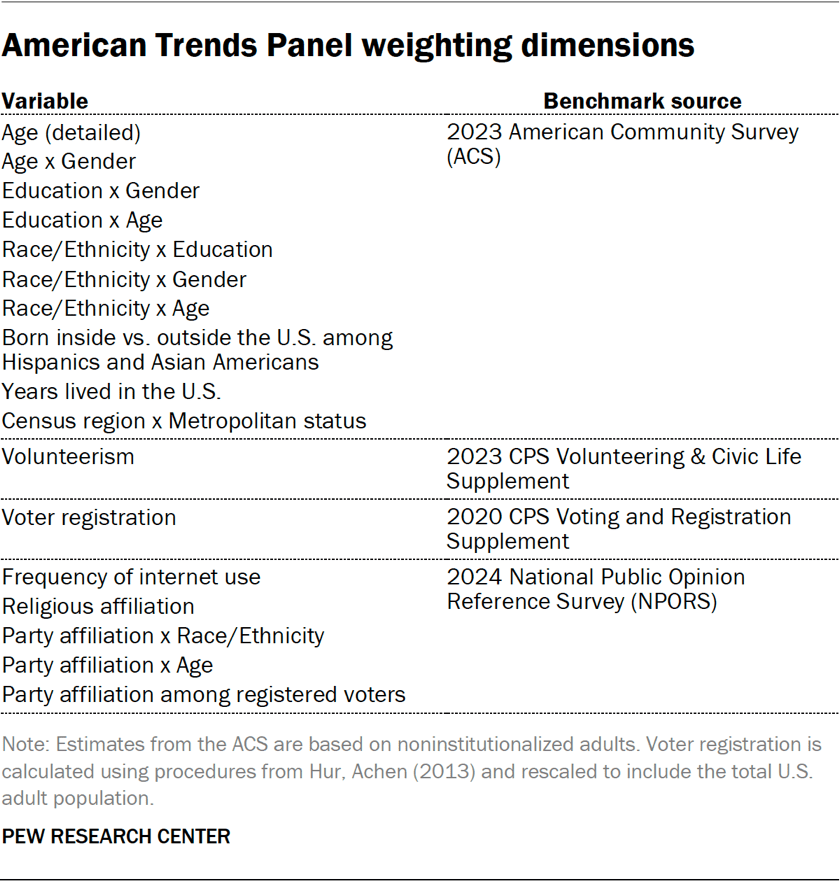

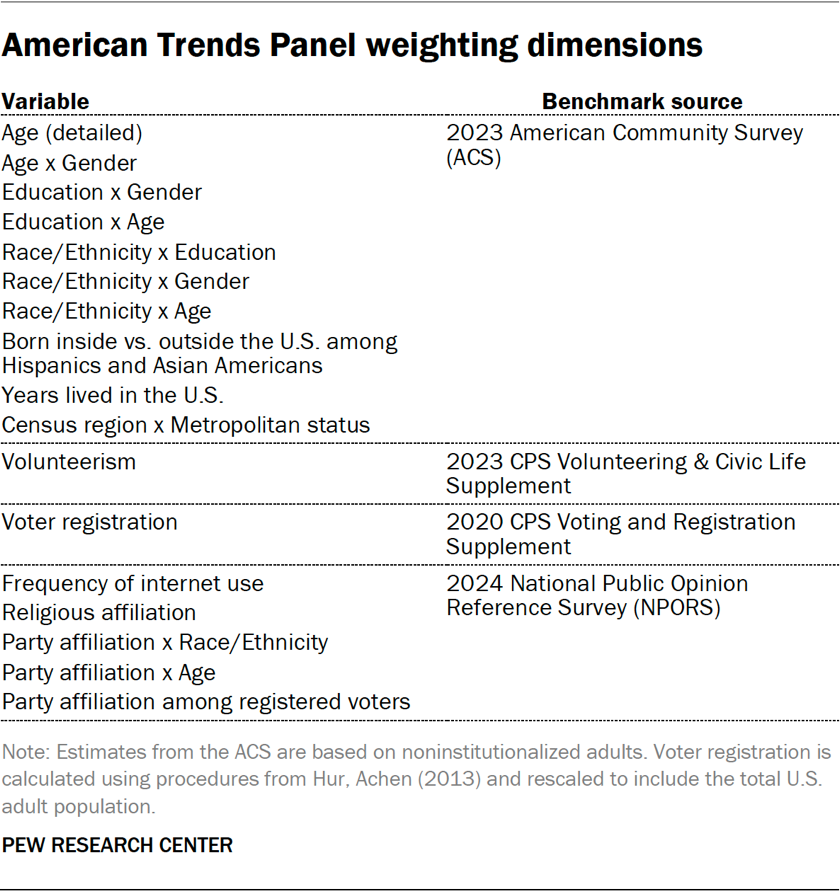

ATP weighting is a multistage process that accounts for sampling and nonresponse at several points. Each panelist starts with a base weight reflecting their probability of selection at recruitment. These weights are calibrated to population benchmarks to correct for nonresponse to recruitment and for panel attrition. When only a subsample of panelists is invited to a wave, as in Wave 166, the weights are further adjusted for differential selection probabilities. Finally, the weights for the wave's respondents are calibrated to population benchmarks once more and trimmed at the 1st and 99th percentiles to limit the loss of precision caused by extreme weights. Sampling errors and tests of statistical significance account for the effect of weighting.
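The calibration and trimming steps can be sketched with raking (iterative proportional fitting), a common way to calibrate weights to population benchmarks. The text does not say which calibration algorithm the ATP uses, so treat that choice as an assumption. The benchmark variables, categories, and target shares below are hypothetical. The trimming step caps weights at the 1st and 99th percentiles of their own distribution using the standard library's `statistics.quantiles`.

```python
import statistics

def rake(weights, categories, targets, iterations=50):
    """Raking (iterative proportional fitting) of weights to marginal population shares.

    categories: variable -> list giving each respondent's category
    targets:    variable -> {category: population share}; shares sum to 1
    """
    w = list(weights)
    for _ in range(iterations):
        for var, cats in categories.items():
            total = sum(w)
            current = {}
            for wi, c in zip(w, cats):
                current[c] = current.get(c, 0.0) + wi
            # Scale each respondent so the category's weighted share hits its target.
            w = [wi * targets[var][c] * total / current[c] for wi, c in zip(w, cats)]
    return w

def trim(weights, lower_pct=1, upper_pct=99):
    """Cap weights at the given percentiles of their own distribution."""
    cuts = statistics.quantiles(weights, n=100, method="inclusive")  # 99 cut points
    lo, hi = cuts[lower_pct - 1], cuts[upper_pct - 1]
    return [min(max(w, lo), hi) for w in weights]

# Hypothetical respondents and benchmarks (not the ATP's actual targets).
sex = ["F", "F", "F", "F", "F", "M", "M", "M"]
age = ["18-49", "18-49", "50+", "50+", "50+", "18-49", "50+", "50+"]
targets = {"sex": {"F": 0.51, "M": 0.49}, "age": {"18-49": 0.55, "50+": 0.45}}

base = [1.0] * len(sex)  # stand-in for base weights
raked = rake(base, {"sex": sex, "age": age}, targets)
final = trim(raked)
```

Trimming moves the weighted margins slightly off their targets; this sketch does not re-rake afterward.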

The report includes tables of unweighted sample sizes and margins of sampling error, at the 95% confidence level, for various demographic groups. It also notes that, beyond sampling error, question wording and practical difficulties in conducting surveys can introduce bias into the findings.
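One common way to see how weighting widens subgroup margins is Kish's approximate design effect, 1 + CV² of the weights, equivalently n·Σw²/(Σw)². The sketch below applies it to a hypothetical subgroup of 400 respondents. The report's own tables may be computed differently (for example, with methods that also reflect stratification), so these figures are illustrative only.

```python
import math

def kish_design_effect(weights):
    """Kish's approximation of the design effect from unequal weights: n * sum(w^2) / (sum w)^2."""
    n = len(weights)
    return n * sum(w * w for w in weights) / sum(weights) ** 2

def margin_of_error(n, deff=1.0, p=0.5, z=1.96):
    """95% margin of error in percentage points, inflated by a design effect."""
    return 100 * z * math.sqrt(deff * p * (1 - p) / n)

# Hypothetical subgroup weights: most respondents weighted 1, a few weighted 3.
weights = [1.0] * 360 + [3.0] * 40
deff = kish_design_effect(weights)
print(f"design effect: {deff:.2f}")                                        # 1.25
print(f"margin of error: ±{margin_of_error(len(weights), deff):.1f} points")  # ±5.5
```

With equal weights the design effect is exactly 1 and the margin reduces to the simple-random-sample formula.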

Methodology for ATP Wave 170: May 2025

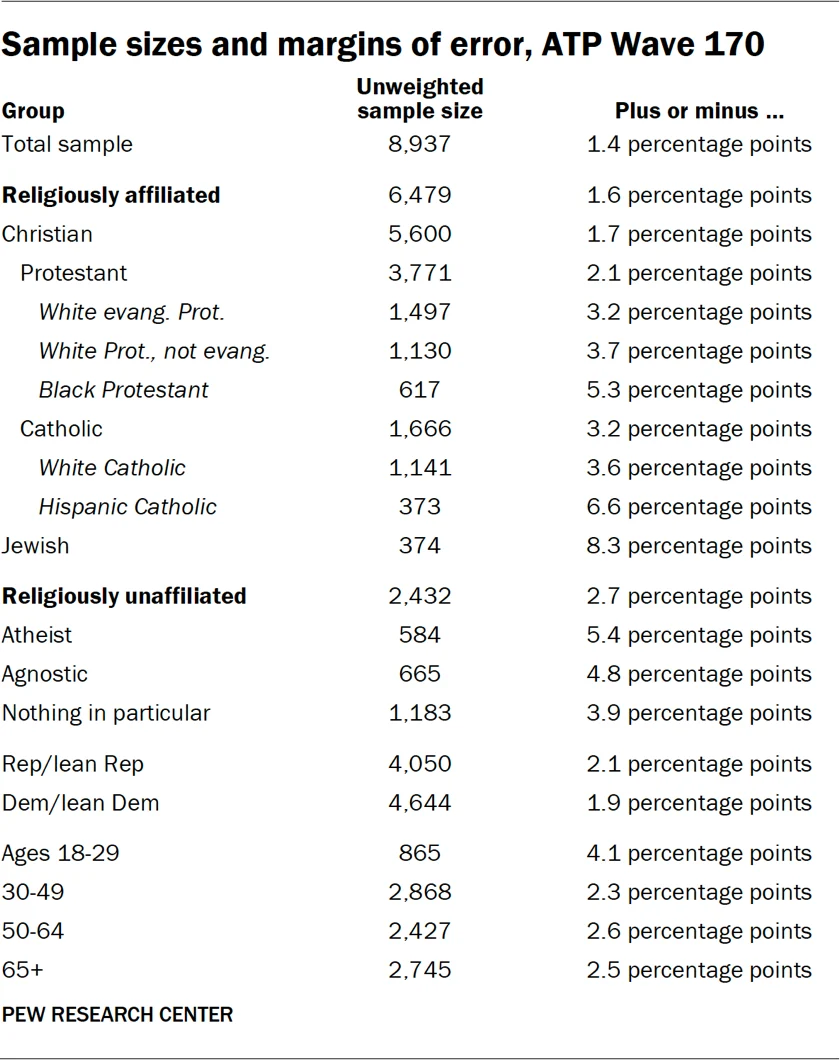

Wave 170 of the American Trends Panel was conducted from May 5 to May 11, 2025. Of 9,531 sampled panelists, 8,937 responded, for a survey-level response rate of 94%. The cumulative response rate, which accounts for nonresponse to recruitment and for attrition, is again 3%, and the break-off rate among panelists who began the survey was less than 1%. The margin of sampling error for the full sample of 8,937 respondents is plus or minus 1.4 percentage points at the 95% confidence level, narrower than in Wave 166 largely because of the larger sample.

SSRS again conducted the data collection for the Center using the same two modes: 8,720 panelists responded online and 217 were interviewed by live telephone interviewers. Interviews were available in English and Spanish.

Panel Recruitment and Sample Design

Wave 170 drew on the same panel, recruited through the address-based sampling (ABS) methods described above. Its sample design differed from Wave 166's, however: rather than drawing a stratified subsample with oversamples, the Center invited all active ATP members who had completed ATP Wave 162 to participate in Wave 170.

Questionnaire Development and Data Collection

The questionnaire for Wave 170 was developed by Pew Research Center in consultation with SSRS and underwent rigorous testing. The data collection period spanned seven days, from May 5 to May 11, 2025.

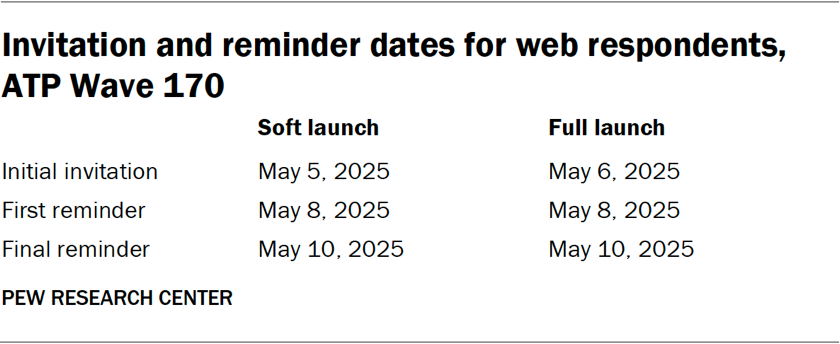

For online respondents, postcard notifications were sent on May 5. A soft launch with 60 panelists began the same day, followed by a full launch to all remaining English- and Spanish-speaking online panelists on May 6. Online panelists who had not yet completed the survey received up to two email reminders, and those who had consented to SMS notifications received a text invitation and up to two text message reminders.

For telephone interviews, prenotification postcards were mailed on May 2. A soft launch on May 5 aimed to secure five completed interviews. Subsequent dialing efforts targeted all remaining sampled phone panelists throughout the field period, with interviewers making up to six calls.

Data Quality and Weighting

Data quality checks were performed for Wave 170 to identify and address any instances of satisficing behavior. Two ATP respondents were removed from the survey dataset based on these checks prior to weighting and analysis.

The weighting procedures for Wave 170 followed the established multi-stage process of the ATP. Base weights reflecting recruitment probabilities were calibrated against population benchmarks to correct for nonresponse and attrition. Adjustments were made for differential selection probabilities for any subsamples. The final weights were calibrated against population benchmarks and trimmed at the 1st and 99th percentiles to enhance precision. Sampling errors and significance tests account for the effects of weighting.

As with Wave 166, the report includes tables of unweighted sample sizes and margins of sampling error for various subgroups. It also notes that question wording and practical difficulties in conducting surveys can introduce error beyond sampling error.

Comparative Analysis and Implications

The two waves follow the same recruitment, fielding, quality-control, and weighting procedures, reflecting Pew Research Center’s consistent approach to methodological rigor and transparency. They differ mainly in sample design. Wave 166 invited a stratified subsample of 4,045 panelists that included oversamples of Jewish, Muslim, and non-Hispanic Asian adults, while Wave 170 invited all 9,531 active panelists who had completed Wave 162. Because both waves drew on the same recruited panel, the higher survey-level response rate in Wave 170 (94% vs. 89%) more plausibly reflects that design, which invited only panelists who had responded to a recent wave, than any change in recruitment. The larger sample in Wave 170 (8,937 vs. 3,605 respondents) yields a narrower margin of error (±1.4 vs. ±1.9 percentage points), allowing more precise estimates of public opinion.
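A quick check shows how much of the narrower Wave 170 margin sample size alone would explain. If the design effect were unchanged, the margin of error would scale with 1/√n. The sketch below carries Wave 166's ±1.9 points to Wave 170's sample of 8,937; the function name is illustrative.

```python
import math

def rescale_moe(moe: float, n_from: int, n_to: int) -> float:
    """Margin of error expected at n_to if only the sample size changed (design effect held fixed)."""
    return moe * math.sqrt(n_from / n_to)

predicted = rescale_moe(1.9, 3605, 8937)  # Wave 166 margin carried to Wave 170's sample size
print(f"predicted: ±{predicted:.1f} points; published for Wave 170: ±1.4 points")  # ±1.2
```

Sample size alone would predict about ±1.2 points, below the published ±1.4. This suggests that the larger sample more than accounts for the narrowing, while a somewhat larger weighting-related design effect in Wave 170 offsets part of it. Both published margins are rounded, but the gap persists across their rounding ranges.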

Both waves used the same mixed-mode design, in which most panelists responded online and a small share were interviewed by telephone (145 of 3,605 respondents in Wave 166, about 4%, and 217 of 8,937 in Wave 170, about 2%). Offering a telephone option helps include panelists whose preferences or access to technology make online participation difficult. The oversamples of Jewish, Muslim, and non-Hispanic Asian adults in Wave 166 make it possible to report reliable estimates for groups that would otherwise yield too few respondents for separate analysis.

Together, the documentation of panel recruitment, questionnaire development, data collection, and weighting gives researchers and the public what they need to judge the reliability of findings from these surveys, including both sampling error and the other sources of error the reports acknowledge. The methods are designed to minimize bias and maximize representativeness, and the Center’s continued transparent reporting of them supports its reputation as a source of reliable social and demographic data.